Mark Zuckerberg’s delusions regarding fake news on Facebook

Mark Zuckerberg defended Facebook this weekend regarding the role of fake news in the election. It’s way worse than he says, and he has a lot of work to do to fix it. Ignorance is the biggest problem in our democracy, and Facebook is making it worse.

First, let me ask you: was your news feed full of questionable “news” during the run-up to the election, or was it accurate? Please post a comment and share your experience.

A taxonomy of fake news

My experience, and that of the people I connect with, was that there was a lot of inaccurate crap in my newsfeed. It’s not all “fake news.” It falls into various categories:

- False statements. For example, “Trump won the popular vote,” debunked by Snopes. This is actual fake news.

- Parody masquerading as fact. For example, “Caitlyn Jenner joins Donald Trump’s Transition Team,” from “The National Report.”

- Parody that’s clearly parody. Like The New Yorker’s Borowitz Report (“Trump confirms that he just Googled Obamacare.”)

- Slanted news. Yes, Fox News and MSNBC, but also a host of sites like Breitbart.com that selectively report facts that support their positions. (And yes, Trump just appointed Breitbart’s former executive chairman as his chief strategist.)

- Obsolete news. For example, a highly intelligent friend of mine recently posted an article about Maryland dumping the electoral college for a scheme to give all its electoral votes to the winner of the popular vote if enough other states do the same. This is true. It also happened in 2007, which was the date on the article.

The problem is worse because the real news is so surreal now. The FBI director is investigating Hillary Clinton’s emails on the laptop of an accused sex offender? Donald Trump said you should grab women by the pussy? Real news, but hard to believe. And when that is the real news, stuff like the Caitlyn Jenner article doesn’t seem so implausible.

Zuckerberg’s defense is weak

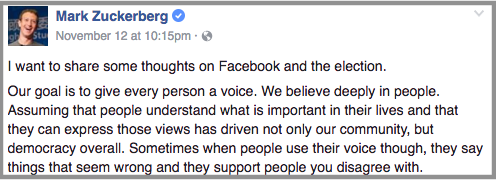

How does Zuckerberg respond to Facebook’s role in this? With denial. Here’s some of his statement posted on Facebook. I added the bold to show weasel words.

After the election, many people are asking whether fake news contributed to the result, and what our responsibility is to prevent fake news from spreading. These are very important questions and I care deeply about getting them right. I want to do my best to explain what we know here.

Of all the content on Facebook, more than 99% of what people see is authentic. Only a very small amount is fake news and hoaxes. The hoaxes that do exist are not limited to one partisan view, or even to politics. Overall, this makes it extremely unlikely hoaxes changed the outcome of this election in one direction or the other.

Sorry, Zuck, this is bullshit. Any time you see the word “deeply,” your bullshit detector should go off. In this case, here’s why he’s wrong.

- While it’s true that there are fake news stories from all political viewpoints, that’s not what people see. Because of the Facebook algorithm, they only see fake news from the viewpoint that matches their own. If you’re liberal, you see fake news that confirms your belief, and the reverse is true for conservatives.

- I saw hundreds of fake stories — a lot more than 1%. And it was clear that my friends who shared them were duped into believing they were real (or worse yet, didn’t care if they were real when they spread them).

- Zuckerberg’s definition of “fake” is too weak — it doesn’t include parodies, slanted news, or obsolete news. It’s not just a fake news problem.

- Facebook can’t even solve its fake ads problem. Its ads are often sites with false claims (Sly Stallone is dead) and fake logos, masquerading as legitimate news sites to sell nutritional supplements.

- In a close election like this, it only takes a few weak-minded, ignorant idiots to change the outcome.

How fake news changed the results of the election

Consider a state like Pennsylvania, for example. Trump won by 64,000 votes out of 6 million votes cast, or about 1% of the total vote. Imagine, for the sake of argument, that 2.94 million people voted for Hillary Clinton because of a belief based on the truth, and 2.94 million voted for Donald Trump based on a true perception of him.

That leaves 2% of voters who don’t have a true perception of either candidate. Where did they get their information?

Is it that hard to believe that 2% of the people in Pennsylvania, or anywhere, are weak-minded idiots? I know they exist, because I’ve seen them on the news and in my Facebook feed. If you believe the stupidest 2% of Pennsylvanians are still smart enough to spot fake news, you’ll have to convince me why.

If fake or distorted news drives more weak-minded, ignorant idiots in Pennsylvania to vote Trump (or drives more idiots leaning toward Clinton to give up, stay home, or vote for a third party), he wins the state. Notice that you don’t have to believe that all or even most of the Trump voters believe this stuff. All it takes is a few ignorant people in a few swing states to make the difference.
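
To put rough numbers on that, here is a minimal Python sketch of the arithmetic. It uses only the figures quoted above (a 64,000-vote margin, about 6 million votes cast, and a hypothetical 2% of voters). The function names are mine, invented for illustration, and this is back-of-the-envelope math, not a model of how anyone actually voted.

```python
# Figures quoted in the post: Pennsylvania margin and approximate turnout.
MARGIN = 64_000
TOTAL_VOTES = 6_000_000


def margin_share(margin: int, total: int) -> float:
    """Winning margin as a fraction of all votes cast."""
    return margin / total


def switchers_needed(margin: int) -> int:
    """Smallest number of voters who must switch sides to reverse the result.

    Each switcher takes one vote from the winner and gives one to the loser,
    so the gap closes by two votes per switcher.
    """
    return margin // 2 + 1


def stay_home_needed(margin: int) -> int:
    """Smallest number of extra votes for the loser (for example, from
    discouraged voters who stayed home) that would reverse the result."""
    return margin + 1


share = margin_share(MARGIN, TOTAL_VOTES)
pool = round(0.02 * TOTAL_VOTES)  # the hypothetical 2% of voters

print(f"Margin as share of votes cast: {share:.2%}")
print(f"Size of the 2% pool: {pool:,}")
print(f"Switchers needed to flip the state: {switchers_needed(MARGIN):,}")
print(f"Extra votes needed to flip the state: {stay_home_needed(MARGIN):,}")
```

Under these figures, a bit over a quarter of that 2% pool switching sides would have been enough to reverse the outcome.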

Did that happen in Pennsylvania, Wisconsin, Michigan, Ohio, North Carolina, and Florida? For that matter, did it happen in Clinton’s favor in New Hampshire, Colorado, Nevada, or Colorado? It’s certainly plausible.

How to solve the problem

Zuckerberg says he’s working on the problem:

This is an area where I believe we must proceed very carefully though. Identifying the “truth” is complicated. While some hoaxes can be completely debunked, a greater amount of content, including from mainstream sources, often gets the basic idea right but some details wrong or omitted. An even greater volume of stories express an opinion that many will disagree with and flag as incorrect even when factual. I am confident we can find ways for our community to tell us what content is most meaningful, but I believe we must be extremely cautious about becoming arbiters of truth ourselves.

It is complicated. We need a system (like Verytas, the browser extension that Sam Mallikarjunan proposed) that marks sites and articles as fake, satirical, slanted, or obsolete, using a community-based system. The system needs to rate the raters as well as the articles, to ensure that partisans on one side or the other can’t skew it. (Only if you are left-right balanced in your news scoring will your ratings have weight.)
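
Here is one way the “rate the raters” idea could work, as a minimal Python sketch. To be clear, this is my own illustration and not how Verytas or any real system is built: the `Rater` record, the `balance_weight` formula, and `article_score` are all hypothetical, and I am assuming each rater’s past flags can be tallied by whether the flagged article leaned left or right.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Rater:
    """Hypothetical rater history: how many left-leaning and right-leaning
    articles this person has flagged as inaccurate so far."""
    flagged_left: int
    flagged_right: int


def balance_weight(rater: Rater) -> float:
    """Weight in [0, 1]. A rater who flags both sides equally gets 1.0;
    one who only ever flags one side (or has no history) gets 0.0."""
    total = rater.flagged_left + rater.flagged_right
    if total == 0:
        return 0.0
    return 1.0 - abs(rater.flagged_left - rater.flagged_right) / total


def article_score(votes: List[Tuple[Rater, bool]]) -> Optional[float]:
    """Weighted share of raters who flagged the article as inaccurate.

    Each vote is (rater, flagged). Returns None if no rater with a
    nonzero weight has voted, since there is nothing trustworthy to report.
    """
    weighted_flags = 0.0
    total_weight = 0.0
    for rater, flagged in votes:
        weight = balance_weight(rater)
        total_weight += weight
        if flagged:
            weighted_flags += weight
    if total_weight == 0:
        return None
    return weighted_flags / total_weight


# Two votes from a one-sided rater and one from a balanced rater.
partisan = Rater(flagged_left=40, flagged_right=0)
balanced = Rater(flagged_left=12, flagged_right=12)
votes = [(partisan, True), (partisan, True), (balanced, False)]
print(article_score(votes))
```

In this example the partisan’s flags carry no weight, so only the balanced rater’s vote counts toward the score. A real system would need much more than this (deciding which articles lean which way is itself a hard problem), but it shows the basic shape of the idea.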

This isn’t easy. But it is necessary. All of the skullduggery on these sites is at Facebook’s feet. You can blame the partisan fake-news spreaders all you want. But you can’t stop them, any more than you can stop a cockroach infestation by stepping on them. You need to clean up the kitchen. It’s Facebook’s kitchen to clean up.

I’d like to start a non-profit, non-partisan tech organization to force this change. Are you with me? Add your name in the comments, along with the skills you bring.

Before and since the election, my newsfeed is full of bullshit, and it really is hard to decide which is which. Sorry, that’s not true – it’s tiring to decide which is which, because I have to read it to know. I haven’t seen much parody that isn’t obvious, but I have seen a lot of posts of outdated material – today I saw a news report about the Senate rejecting a bill that would have boosted veterans’ benefits. It’s accurate; but it’s from 2014, so why post it now? The list is long, but I’d estimate that at least 50%, and more like 70%, of what I saw was either slanted or outright bullshit – and I’d say both sides were about even but with somewhat more pure nonsense from the right.

Yes – old news. It confuses people. Not all sites have the date of publication clearly stated.

We are not a Democracy but a Republic. Get that fact straight.

I agree, Zuckerberg is full of crap and tap dances around issues so he can continue to build wealth from useful idiots using FB.

Yes, we are a republic. We are not a direct democracy. But we are a democracy too — we decide on our leaders by voting. The two are not mutually exclusive.

Worthy project.

I was a writer and editor at Forbes for 7 years, Wired for 8. Started buzzkiller.net in 1997. Agree that b.s. on FB had a significant effect on the election.

I agree. The primary skill I choose to bring to the table is to stop using FB (already begun).

Yes, I am with you. I have my M.S. in Library & Information Science and worked as a corporate researcher and CI professional for 14 years. I also volunteered my time for years as a community manager of social media sites for a couple of different organizations.

Definitely agree that something needs to be done. I keep telling myself I will NEVER read political articles in Facebook, but I get sucked in. My preference is to subscribe to a few balanced sources with credible journalists. But many people won’t spend the money, and get their news from Facebook. It’s Facebook where we really need to “Drain the Swamp.”

I agree!

Great plan! I want to be part of it. I’m a writer, a magazine editor, and a highly skilled researcher. I make a point of notifying people when they are reposting false or misleading information. Personally, I’m hardly willing to post a quote without verifying the source and the original context! When do we begin?

This and …

The issue with social media is that it allows for us to effectively cull out any and all opposing voices. I’m guilty of this as much as anyone. Over the course of this election cycle, I’ve either muted or outright unfriended probably 20 to 30 of my FB “friends” due to content they pushed out. Much of the content they were passing along was BS. I’d put the BS content squarely at 50-50 between my left- and right-leaning friends. I was weary of seeing it, fact checking it, and showing them how it was wrong as this just led to a digital shouting match. “Well that’s what the [left/right]-leaning media wants you to think” was the basic response. It became easier to mute/unfriend than it was to type a rebuttal.

But the “ignorance cleansing” I enacted on my feed created a narrow channel of like-minded viewpoints. I still saw BS, only I suppose this BS smelled better to me since it was on my side of the fence? This is not how a democracy/republic/whatever should work, at least not in my mind. We can’t just listen to voices from one side, but that’s what social media allows us to do. We end up with a “news” feed which preaches to the choir we stand in. This, in turn, makes it easier to get polarized to one view. In an effort to bring us all together, social media has only ripped us further apart by allowing us to effectively segregate ourselves to like-minded groups.

I recently opened up my news feed by unmuting the muted and re-friending the unfriended (those that would have me back). All the bile, hate and ignorance returned to my feed. Is this what a functioning democracy sounds like in the 21st century?

I am not sure I have any skills to offer, but I’m a heavy user of Facebook. I am, however, a writer, and I have to say that when I began reading this blog (I believe since the first post) it hadn’t yet occurred to me how political – not partisan, but political – your message is. Clarity of purpose, truth in interactions – these are things we should expect from business and political leaders.

Count me in. Skills include being an avid Facebook user, being committed to truth and facts (smug liberalism to be damned), project management and other digital marketing stuff.

On Shark Tank a couple of months ago, a young woman brought an app she had coded which seeks to stop online bullying by recognizing hate words in emails, texts and social media posts and triggering a popup that says, “Are you sure you want to post this?” Apparently asking the question and suggesting alternative sentences was incentive enough to stop bullying posts.

Similarly, Facebook should scrape content you share for hate speech, date of the post, legitimacy of the site, map against a Snopes filter and ask people if they really want to post it. If they still do, then it has a big red question mark on the post indicating its veracity is questionable. We let public shaming handle the rest.

Thoughts?

I’ll help with marketing. We will find that asking people to like reality and facts is a bit like asking them to eat their vegetables. The product will need to provide some joy along with the nutritional value for users on both the right and the left, and this joy needs to be baked in to the product, not just tacked on at the end.

If you need anything done, especially in Northern Europe, count me in. I’ve been in SaaS/online business for more than a decade, ranging from sales in media monitoring to running a listed software company, as well as many startups. I’ve lived in the US for a couple of years in the past 20, as well as all over the EU. I have followed all US elections heavily and without bias, and especially this one, in awe, for almost a year and a half now. Looking at it from this side of the pond (Finland), it is very clear that this sort of effort has to be global. I am in if needed.

Hi Josh.

I had a LOT of it in my feed. What you did not include is the meme photos from obviously partisan pages, that perpetuated and reinforced the narratives that the bogus stories carried.

What do I bring to the table?

– A writer who pays close attention to language. (You already have that covered.)

– A mostly a-political being who strives to keep his feed balanced against the headwinds of the algorithm.

– An erratic but reliable penchant for tying people together in creative ways to solve problems. {you know EXACTLY what I am talking about}

In other words, just the wild card you were hoping would emerge from the deck. 😉

Josh, I’ve been saying the exact same thing and have been thinking very hard about how to enable people to vet the accuracy of their news better. We should talk!

Count me in as a truth vigilante, or at least vigilant about what gets passed off as truth. I’m a writer, former editor, experienced project manager, retired IT executive and I’m a frequent contributor to http://www.Incredi-bull.net (I write jingles). I graduated from the Newhouse School of Journalism at Syracuse University when journalism was associated with reporting facts. The recent rise of “true facts” as an expression leaves me convinced we need to make it more difficult to perpetuate lies, half-truths and innuendo unchallenged. Whackypedia? Truth Checks Clan? Incredible bullshit?

There was a lot of fake news in my feed but, as another commenter mentioned, by far the worse problem is that probably 80% of the content is blatantly false memes. Since they’re images, and therefore unsearchable, the massive effect they are having is hard to measure.

Mark Zuckerberg has never used Facebook if he thinks that Facebook information is largely accurate. I have acquaintances on Facebook who used false memes to “prove” their arguments and circulate lies, in some cases convincing others of complete bullshit.

A noble cause. I’m a graduate of Boston University’s College of Communication, currently working at a leading social media analytics company. Admittedly a data science amateur and a fledgling analyst, but a fierce devil’s advocate in search of truth. For what it’s worth, I’m also a bilingual Hispanic millennial.

Let me know how I can help.

I’m in! I was a newspaper editor in a past life (1985 to 1989) on two country newspapers here in Oz. Of course, my being Australian should be skill enough.

“For that matter, did it happen in Clinton’s favor in New Hampshire, Colorado, Nevada, or Colorado? ” The second Colorado would be the one where voters did a few cones before going to vote! 😉

Count me in on your change forcing non-partisan organisation. I quit FB 3 years ago because I couldn’t take the horse-dung anymore.