Biden claimed Facebook is killing people. Facebook’s response missed the point.

On Friday, President Biden said social media platforms including Facebook are “killing people” with misinformation about COVID-19. Facebook’s response: “It’s not us, really.” Facebook’s statement is classic deflection; I’ll deconstruct it for you.

On July 15, Vivek Murthy, the US Surgeon General, made a public statement about virus misinformation, which he called “an urgent threat to public health.” His 22-page report describes how, as late as May of this year, 67% of unvaccinated adults had heard at least one virus myth and either believed it or thought it might be true. This was the context for Biden’s statement.

Causation is never simple

When you ask why something happened, there is rarely a single cause. This is crucial to understand as we take action to halt the spread of the virus.

Consider a simple situation. I’m walking into my kitchen and one of my children has left a shoe on the floor. I trip on the shoe, fall, and break my wrist. What was the cause of the accident?

Was it because my bones are weak? Because I awkwardly broke my fall with my wrist? Because I wasn’t alert enough to what was on the floor? Because my children were careless in leaving a shoe around? Because my wife didn’t pick it up? Because we didn’t train our children to be more orderly? Because the shoe was nearly the same color as the floor?

Maybe it was because I was preoccupied with considering the state of social media instead of watching my surroundings.

Nothing has a single cause. Nothing. “Why” questions always have multiple answers. And if you are asking how to fix a problem as complex as a pandemic, the solution is never to fix a single cause alone.

Analyzing Facebook’s response

Here’s what Facebook wrote to address Biden’s statement and my analysis of their response.

Moving Past the Finger Pointing

July 17, 2021

By Guy Rosen, VP of Integrity

Let’s start with the title. The whole point of this exercise is for Facebook to say “Not us!” But with complex causes, there are always plenty of people to point the finger at. The fact that others are also to blame doesn’t lessen Facebook’s role in the spread of misinformation that is interfering with vaccination, masks, social distancing, and other elements of protecting America against the spread of the virus.

At a time when COVID-19 cases are rising in America, the Biden administration has chosen to blame a handful of American social media companies. While social media plays an important role in society, it is clear that we need a whole of society approach to end this pandemic. And facts — not allegations — should help inform that effort. The fact is that vaccine acceptance among Facebook users in the US has increased. These and other facts tell a very different story to the one promoted by the administration in recent days.

The Biden administration is blaming a whole lot of people, not just social media companies, but the social media companies are a crucial link in the chain of spreading misinformation.

While it may be true that “we need a whole of society approach to end this pandemic,” that doesn’t let Facebook off the hook. Without Facebook and other social media companies, misinformation cannot easily spread. So social media is a crucial link in the chain of misinformation.

Here’s an analogy. Suppose I told you that large, crowded events were causing the spread of COVID. An event venue replies, “we need a whole of society approach to end this pandemic.” While that may be true, it doesn’t mean that crowded events full of unvaccinated people should continue without any modification.

Facebook says, “The fact is that vaccine acceptance among Facebook users in the US has increased.” Certainly, that is true. But the problem is the significant number of remaining vaccine resisters. “We’re moving in the right direction” is not equivalent to “Facebook has no blame for the remainder of the misinformation problem.”

Since April 2020, we’ve been collaborating with Carnegie Mellon University and University of Maryland on a global survey to gather insights about COVID-19 symptoms, testing, vaccination rates and more. This is the largest survey of its kind, with over 70 million total responses, and more than 170,000 responses daily across more than 200 countries and territories. For people in the US on Facebook, vaccine hesitancy has declined by 50%; and they are becoming more accepting of vaccines every day.

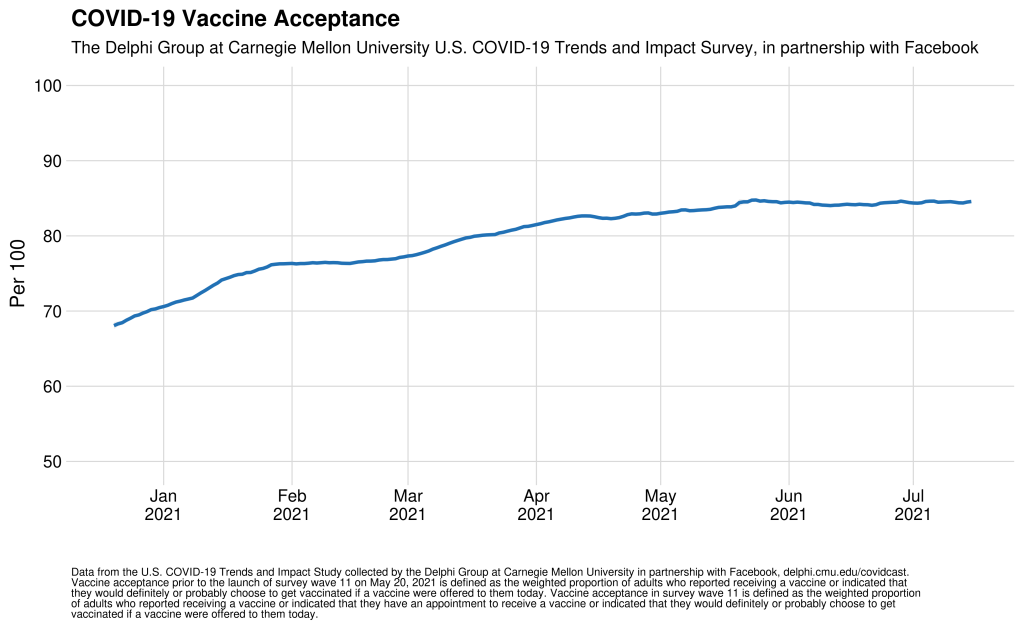

Since January, vaccine acceptance on the part of Facebook users in the US has increased by 10-15 percentage points (70% → 80-85%) and racial and ethnic disparities in acceptance have shrunk considerably (some of the populations that had the lowest acceptance in January had the highest increases since). The results of this survey are public and we’ve shared them — alongside other data requested by the administration — with the White House, the CDC and other key partners in the federal government.

Resistance remains high enough to derail the push to herd immunity. Let’s assume that Facebook’s statement about a 50% reduction in vaccine hesitancy, presumably since January, is correct. Of course, in January, there was a lot more ignorance and very little information about vaccines. Now there is a lot more information, information that has won over many people. What’s left are the people most susceptible to misinformation — a problem that Facebook continues to contribute to.

The data shows that 85% of Facebook users in the US have been or want to be vaccinated against COVID-19. President Biden’s goal was for 70% of Americans to be vaccinated by July 4. Facebook is not the reason this goal was missed. [Bold in original]

The statement “Facebook is not the reason this goal was missed” does not contradict “Facebook has contributed to missing the goal.” Things have more than one reason.

Examine this chart carefully. Vaccine acceptance among Facebook users leveled off in May and is no longer increasing. Could misinformation circulating on Facebook be part of the reason?

In fact, increased vaccine acceptance has been seen on and off Facebook, with many leaders throughout the US working to make that happen. We employed similar tactics in the UK and Canada, which have similar rates of Facebook usage to the US, and those countries have achieved more than 70% vaccination of eligible populations. This all suggests there’s more than Facebook to the outcome in the US.

Absolutely, there is more than Facebook to the outcome in the U.S. It is a deadly mix of antigovernment sentiment, political polarization that leads to distrust of the Biden administration, media figures like Tucker Carlson and politicians like Marjorie Taylor Greene spreading vaccine doubt, and social media spreading the misinformation. The American populace is not the same as that of the UK or Canada. But that doesn’t mean Facebook is blameless.

Now vaccination efforts are rightly turning to increasing access and availability for harder-to-reach people. That’s why we recently expanded our pop-up vaccine clinics in low-income and underserved communities. To help promote reliable vaccine information to communities with lower access to vaccines, we are using the CDC’s Social Vulnerability Index. This is a publicly available dataset that crisis and health responders often use to identify communities most likely to need support, as higher vulnerability areas have had lower COVID-19 vaccination coverage.

We have been doing our part in other areas, too:

– Since the pandemic began, more than 2 billion people have viewed authoritative information about COVID-19 and vaccines on Facebook. This includes more than 3.3 million Americans using our vaccine finder tool to find out where to get a COVID-19 vaccine and make an appointment to do so.

– More than 50% of people in the US on Facebook have already seen someone use the COVID-19 vaccine profile frames, which we developed in collaboration with the US Department of Health and Human Services and the CDC. From what we have seen, when people see a friend share they have been vaccinated, it increases their perceptions that vaccines are safe.

– We’re continuing to encourage everyone to use these tools to show their friends they’ve been vaccinated. For those who are hesitant, hearing from a friend who’s been vaccinated is undoubtedly more impactful than hearing from a large corporation or the federal government.

Facebook’s good works in expanding access to vaccines and spreading positive information don’t let it off the hook for spreading misinformation. If your husband dies because he believed something inaccurate that he read on Facebook and didn’t get vaccinated, it’s not much comfort to know that a person in the community next door got vaccinated with Facebook’s help.

And when we see misinformation about COVID-19 vaccines, we take action against it.

– Since the beginning of the pandemic we have removed over 18 million instances of COVID-19 misinformation.

– We have also labeled and reduced the visibility of more than 167 million pieces of COVID-19 content debunked by our network of fact-checking partners so fewer people see it and — when they do — they have the full context.

Let’s assume these numbers are accurate. They don’t speak to how much misinformation Facebook didn’t stop. Did they block 90% or only 10%? It doesn’t say.

In fact, we’ve already taken action on all eight of the Surgeon General’s recommendations on what tech companies can do to help. And we are continuing to work with health experts to update the list of false claims we remove from our platform. We publish these rules for everyone to read and scrutinize, and we update them regularly as we see new trends emerge.

“Taken action on” are weasel words. What is Facebook actually doing? How well is it enforcing its own rules? How much misinformation continues to spread? It doesn’t say.

The Biden Administration is calling for a whole of society approach to this challenge. We agree. As a company, we have devoted unprecedented resources to the fight against the pandemic, pointing people to reliable information and helping them find and schedule vaccinations. And we will continue to do so.

So you’re just claiming victory and moving on?

Not good enough.

The CrowdTangle dodge shows what’s missing here

Facebook acquired an analytics tool called CrowdTangle in 2016. It showed how much misinformation was spreading.

The team that runs it was just scattered to the winds, so that Facebook would not publicly share nearly as much data about the spread of misinformation on the platform. As the New York Times’ Kevin Roose reports:

[Facebook marketing and analytics executives] argued that journalists and researchers were using CrowdTangle, a kind of turbocharged search engine that allows users to analyze Facebook trends and measure post performance, to dig up information they considered unhelpful — showing, for example, that right-wing commentators like Ben Shapiro and Dan Bongino were getting much more engagement on their Facebook pages than mainstream news outlets.

. . . CrowdTangle and its supporters lost. . . [T]he CrowdTangle story is important, because it illustrates the way that Facebook’s obsession with managing its reputation often gets in the way of its attempts to clean up its platform.

Here’s the most important paragraph in that article, in my opinion:

Mr. Zuckerberg is right about one thing: Facebook is not a giant right-wing echo chamber.

But it does contain a giant right-wing echo chamber — a kind of AM talk radio built into the heart of Facebook’s news ecosystem, with a hyper-engaged audience of loyal partisans who love liking, sharing and clicking on posts from right-wing pages, many of which have gotten good at serving up Facebook-optimized outrage bait at a consistent clip.

CrowdTangle’s data made this echo chamber easier for outsiders to see and quantify. But it didn’t create it, or give it the tools it needed to grow — Facebook did — and blaming a data tool for these revelations makes no more sense than blaming a thermometer for bad weather.

So let’s review.

Facebook has done a lot to help slow the spread of the virus.

Vaccine hesitancy is down, but not dropping any further.

Facebook is blocking some virus misinformation.

But lots of misinformation is still spreading on Facebook, and it is interfering with efforts to slow the spread of the virus.

And Facebook is now hiding the tools that quantify the spread of that misinformation.

Is Facebook killing people? That’s a “why” question, which is hard to answer.

But Facebook is certainly not blameless. And as a result of the weakness of its efforts, more people are going to die.

Sounds like the playbook has not changed for years. I wish that the top leadership at the company recognized the toxic impact it was having on society.

https://www.amazon.com/Untitled-Sheera-Frenkel/dp/0062960679/ref=sr_1_1?crid=32BQPTA90AF9Y&dchild=1&keywords=an+ugly+truth&qid=1626715224&s=books&sprefix=Ugly+tr%2Cstripbooks%2C212&sr=1-1

Very good book that goes far deeper into this topic.

The whole CrowdTangle nonsense sounds like what the previous president was spouting – “don’t test so much, then our numbers won’t be so high.” Data is data. You might not like it, but it is what it is.

I couldn’t help but notice how your list of contributing factors to the pandemic/low vaccination rates implicitly assumes that it’s a right/left problem. Anti Biden? Anti government? A list of extreme right wing characters?

In an article about multiplicity of causes it screams in hi-vis colors how politically one sided your “causes” really are. I would find your entire argument more compelling if you had listed even one left-aligned party as a contributing factor to the problem. An easy one could have been the CDC’s inconsistent messaging. Not that there aren’t a whole pile more, but just one would have done it.

Just a bit confused by this. Are we supposed to let Facebook off the hook because the CDC messaging was not consistent?

Why do you assume that antigovernment sentiment and political polarization are “right wing causes?”

There is no left-wing media spreading anti-vax sentiment. They’re far from perfect, but I haven’t seen them doing anything that leads to vaccine mistrust and virus spreading.

There is an extreme wacky antigovernment set of left-wing loonies who are vaccine skeptics. It’s not large. Meanwhile, a recent poll found that 30% of Republicans are resistant to getting the vaccine, compared to 13% of the general population (https://www.pewtrusts.org/en/research-and-analysis/blogs/stateline/2021/04/23/republican-men-are-vaccine-hesitant-but-theres-little-focus-on-them).

What causes are there for that? And how is Facebook contributing to it?