Analysts, the Dunning-Kruger Effect, and the Gartner Hype Cycle

A little knowledge is dangerously misleading. That’s the message of the Dunning-Kruger effect, in which people with little skill overestimate their competence. It’s the message of the Gartner Hype Cycle, in which people get overenthusiastic about new technologies. And it’s the reason that analysts, over and over again, get overenthusiastic about whatever’s new.

In 1999, the psychologists David Dunning and Justin Kruger revealed an intellectually fascinating phenomenon, one that’s familiar to anyone who’s ever learned anything. When you begin to learn to code, or to play the guitar, or to use a spreadsheet, progress is rapid. Small amounts of effort create large amounts of improvement. This creates confidence. And confidence creates the illusion that you are really, really good at what you’re learning. After a short while, you say to yourself, “I got it. I am terrific at this!”

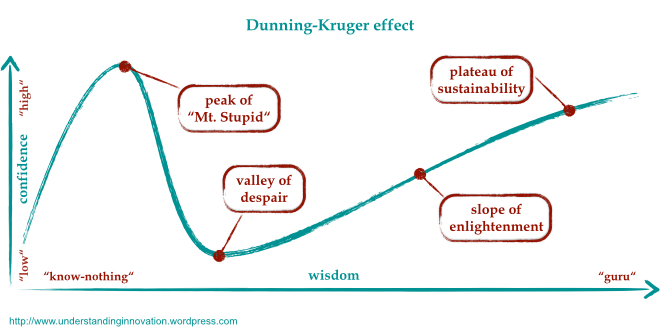

This is because you have learned just enough to perform, but not enough to realize what you don’t know. The pinnacle of this phenomenon is sometimes called “Mount Stupid.” It’s a peak because, if you continue to learn, you soon figure out that there is a mass of knowledge you don’t have. After that, the more you learn, the more you realize how much you haven’t learned yet. You are sliding down the other side of Mount Stupid.

Here’s a picture by Ulf Ehlert from “Understanding Innovation,” another blog that has analyzed the parallels I’m talking about.

The Hype Cycle is also about ignorance and confidence

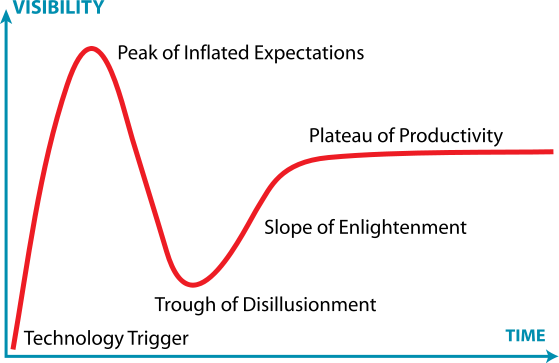

The shape of the Dunning-Kruger curve is remarkably similar to another famous graph about ignorance: the Gartner Hype Cycle.

When I first saw this as a Forrester analyst, I was immediately drawn to it (and jealous of Gartner for coming up with it). The idea is that for any technology, say, blockchain, there is a predictable evolution. As the technology first emerges, people get very excited about it. The hype builds on itself until we reach the peak of inflated expectations (which is where Bitcoin is right now). Since the product cannot live up to the hype, the inevitable articles about how overhyped it is follow, and the technology slides into the trough of disillusionment. Then, if it succeeds at all, it does so because tech vendors and users put in the hard work to turn it from an idea into something useful.

The role of ignorance is the reason this curve is so similar to the Dunning-Kruger curve. When all you can see is the promise, you overestimate the potential. Just as in Dunning-Kruger, small increases in knowledge generate large increases in confidence. The difference here is that it is a group phenomenon, in which technology vendors, journalists, and analysts spur each other on to higher and higher degrees of hype, binding their ignorance together and riding it to a PR crescendo.

Why analysts are particularly vulnerable to Dunning-Kruger

Analysts at firms like Gartner and Forrester study technology and its applicability in business. When a technology is new, it is the analyst’s job to make sense of it. You would expect analysts, based on their time spent on research, to be particularly accurate at technology predictions. Instead, they often fall victim to the peak of inflated expectations just like everyone else. (I was certainly guilty of this when I was an analyst, particularly around technologies like interactive TV and digital video recorders.) Why are the most knowledgeable people in a market, people who are dedicated to sober analysis and objectivity, sucked in?

Here’s why.

- Analysts thrive on the new. Who wants to write another report about databases? Analyst ideas need three things to take off: they must be big, new, and right. When you’re the first to identify and analyze a new tech trend, your ideas are automatically big and new: two out of three. You get lots of attention from the press (who need somebody unbiased and expert to quote) and from clients (who need somebody to explain the new thing). This is a strong incentive to tie yourself to things that are new and exciting.

- Those who hype have venture capital and PR. If you are a vendor pushing blockchain, artificial intelligence, or whatever is new and hot, you certainly have a vested interest in getting analysts excited. So you brief them. There are very few briefings about how the new thing isn’t important, since that’s not a very interesting thing to brief an analyst on.

- Analysts have a bias toward change, because it’s more interesting to analyze. What could blockchain mean for finance? For the rule of law? For the future of currency? For the future of security? For world demand for electricity? There are many interesting consequences to consider, and lots of fodder for analysis. On the other hand, what could the non-emergence of blockchain mean? The same things we’re already doing. It’s way more fun to analyze the new thing than the failure of the new thing.

- Analysts seek examples that reinforce the new thing. When you’re writing about something new, you need proof points. This means you seek out people who are accomplishing something with the new technology. There may be only a few, but your job is to reveal what they have learned, since nobody has collected these few data points together before. This is research, and it is valuable. But it is also self-reinforcing. You don’t interview the 99% of people who are not using the new technology, since you already know what they have to say on the topic, which is usually nothing.

- The penalty for being wrong is less than the penalty for missing the trend. You could make a mistake either way. But if the new tech is overhyped but significant, it’s going to reach the slope of enlightenment eventually, and you will still be the expert on it. What you’ll get wrong is the timing. (Remember Amara’s Law, which says that we tend to overestimate the effect of a technology in the short run and underestimate it in the long run.) On the other hand, if a new technology is headed for importance and you don’t see its significance, you’re pilloried as clueless and backward, and the other analysts get the ink, the briefings, and the client interest. If you have to choose (and you do, because analysts make the call), you tend to choose the more aggressive prediction.

All of these reasons, together, make analysts susceptible to hype. And in contrast to the Dunning-Kruger effect, whose victims have no idea they’re victims (that’s why it’s called Mount Stupid), analysts know there is a risk. They know about the peak of inflated expectations. But they do the math, at least unconsciously, and still determine that it’s better to be part of that peak than to miss it.

Ironically, all these incentives reverse once the hype has reached a crescendo. The contrarian analyst now has strong incentives to puncture the hype, demonstrate that it’s overdone, and explain the consequences of the failure of expectations. (It’s typically not the same analyst who jumped onto the hype, but it can be.) So analysts now overcorrect, dismissing the trend as overblown. In fact, this is just when the real knowledge of how to put the technology to work is beginning to arise, and when the need for research-based analysis is greatest.